About Me 👨‍🔬

I am currently an Assistant Professor at the Guangdong Laboratory of Artificial Intelligence and Digital Economy (SZ), affiliated with Shenzhen University.

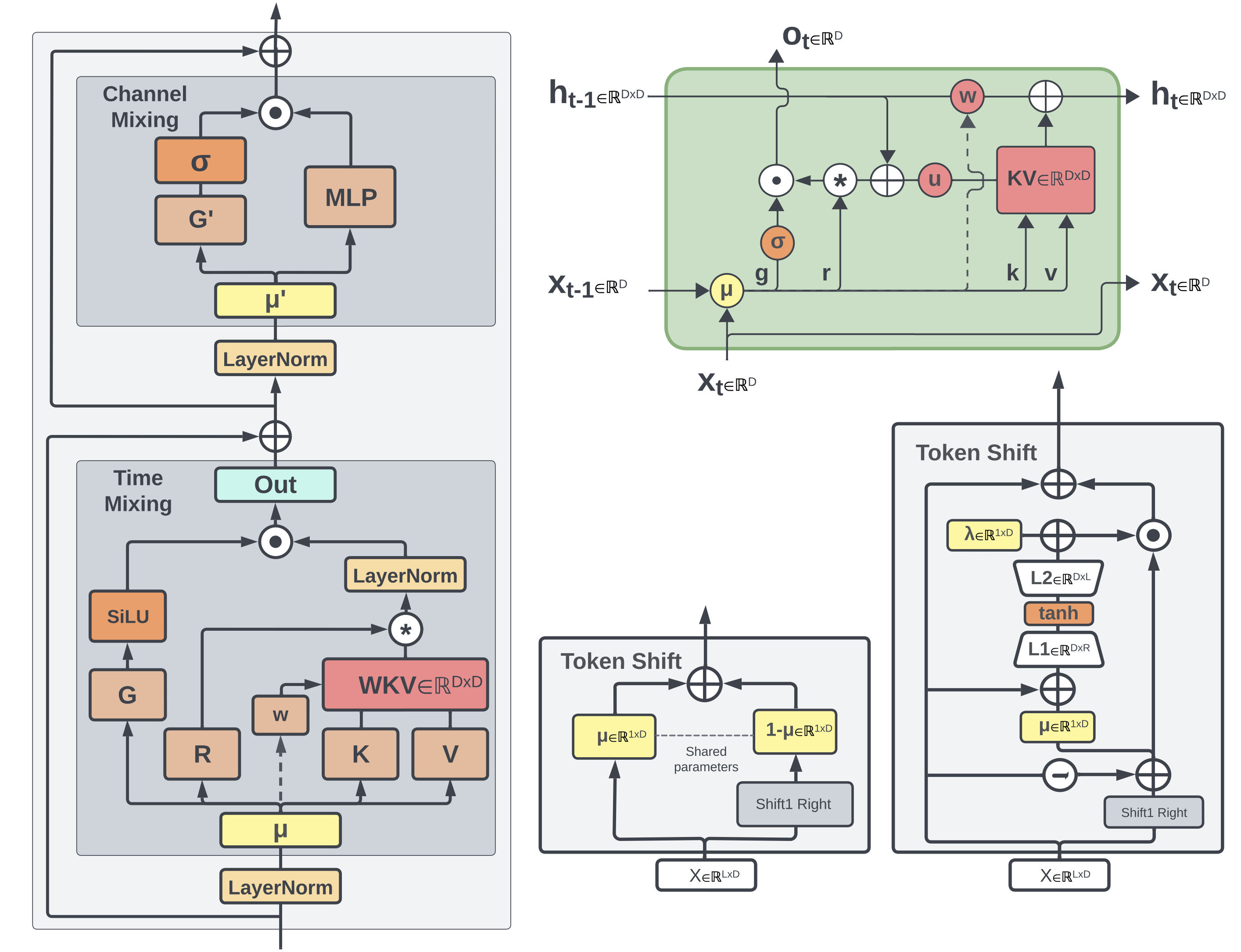

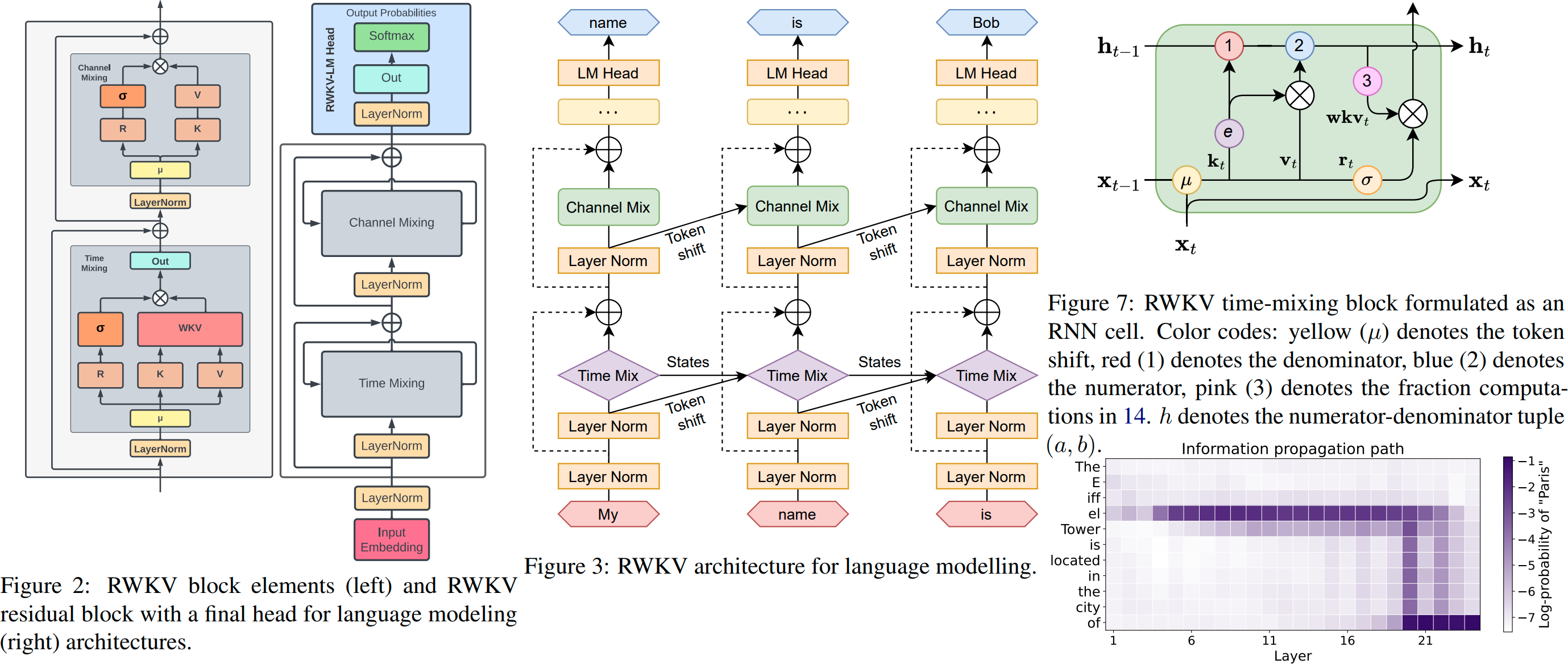

My current research interests 🔬 focus on RWKV, an efficient linear attention architecture for language modeling.

My work has been published at leading AI venues, including EMNLP, COLING, COLM, and AAAI, with 20+ papers and 1,700+ citations.

Work Experience

- 2023.08 - Now, Guangdong Laboratory of Artificial Intelligence and Digital Economy (SZ), Assistant Professor

- 2017.08 - 2022.11, Tencent Machine Learning Platform (Now HunYuan Team), Senior Research Scientist

Education 📖

- 2013.01 - 2017.05, Ph.D., National University of Singapore, Singapore.

- 2008.08 - 2012.07, B.Eng., Harbin Institute of Technology, Harbin.

News 🔥

- 2026.02: 🎉 SceneRAG is accepted by ICASSP 2026

- 2025.12: 🎉 VisualRWKV-HM is accepted by Information Fusion (IF=15.5)

- 2025.11: 🎉 PSPO is accepted by AAAI-26

- 2025.03: 🎉 RWKV-UI is accepted by ICME 2025

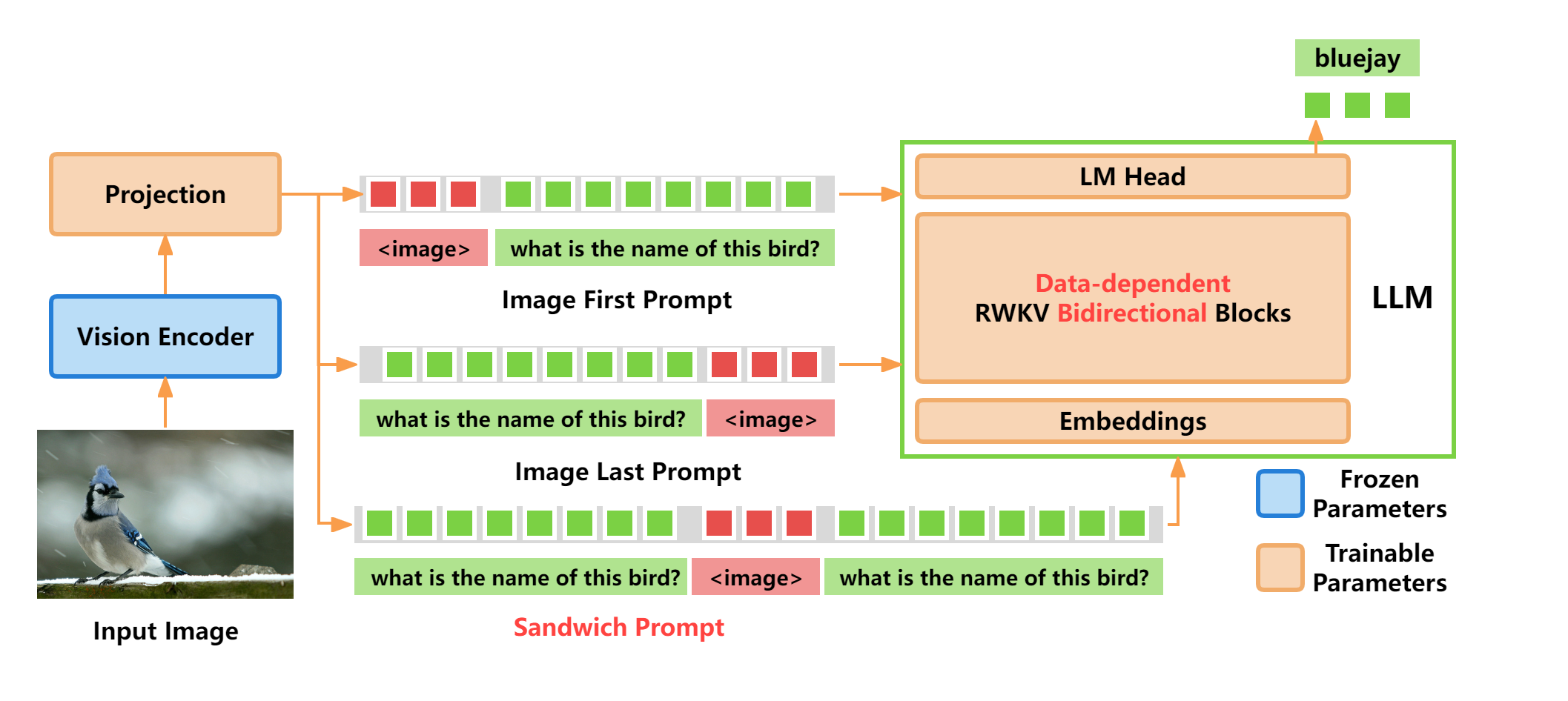

- 2024.12: 🎉 VisualRWKV is accepted by COLING 2025; check out the Chinese introduction video on Bilibili 🎬

Selected Projects 🏭

2026 Large-Scale LLM Pre-Training, Mid-Training, and Multimodal Training with RWKV

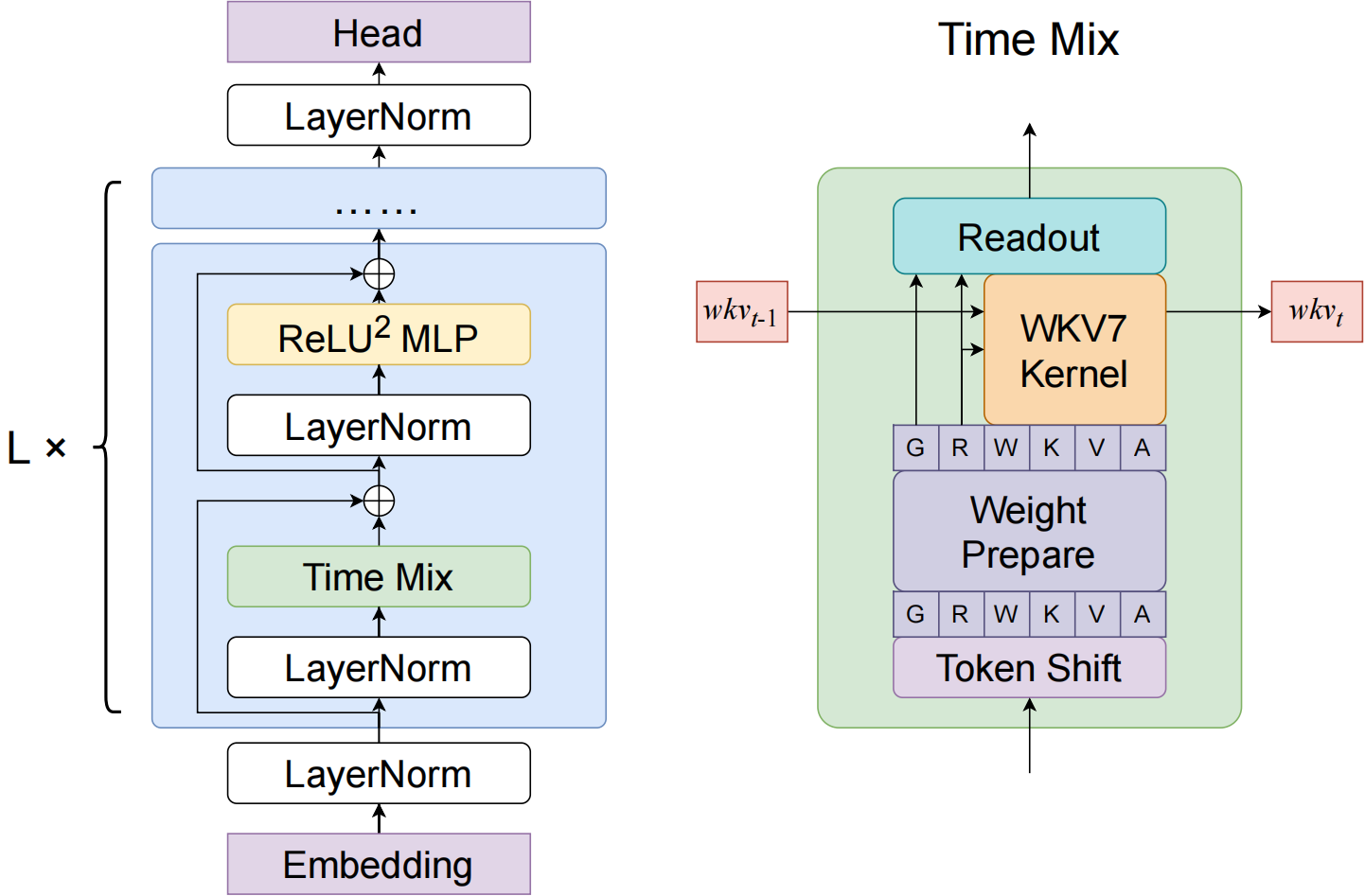

- Served as a core technical leader in the development of next-generation large language model architectures in the RWKV series, including linear-attention models (RWKV-4/5/6/7), hybrid-attention models (RWKV-X), and vision-language models (VisualRWKV).

- Made major contributions across the full training pipeline, including large-scale pre-training, mid-training, and multimodal training, with primary ownership of the multimodal training direction.

- Led or co-led key efforts in scaling models beyond 10B parameters and training on approximately 8T tokens, covering architecture innovation, training methodology, and large-scale system optimization.

- Advanced a novel architecture that unifies Transformer-style parallelizable training with RNN-style efficient inference, making RWKV a strong candidate for future foundation model and AGI-oriented architectures.

2026 LLM Post-Training Optimization with PSPO (Accepted by AAAI-26)

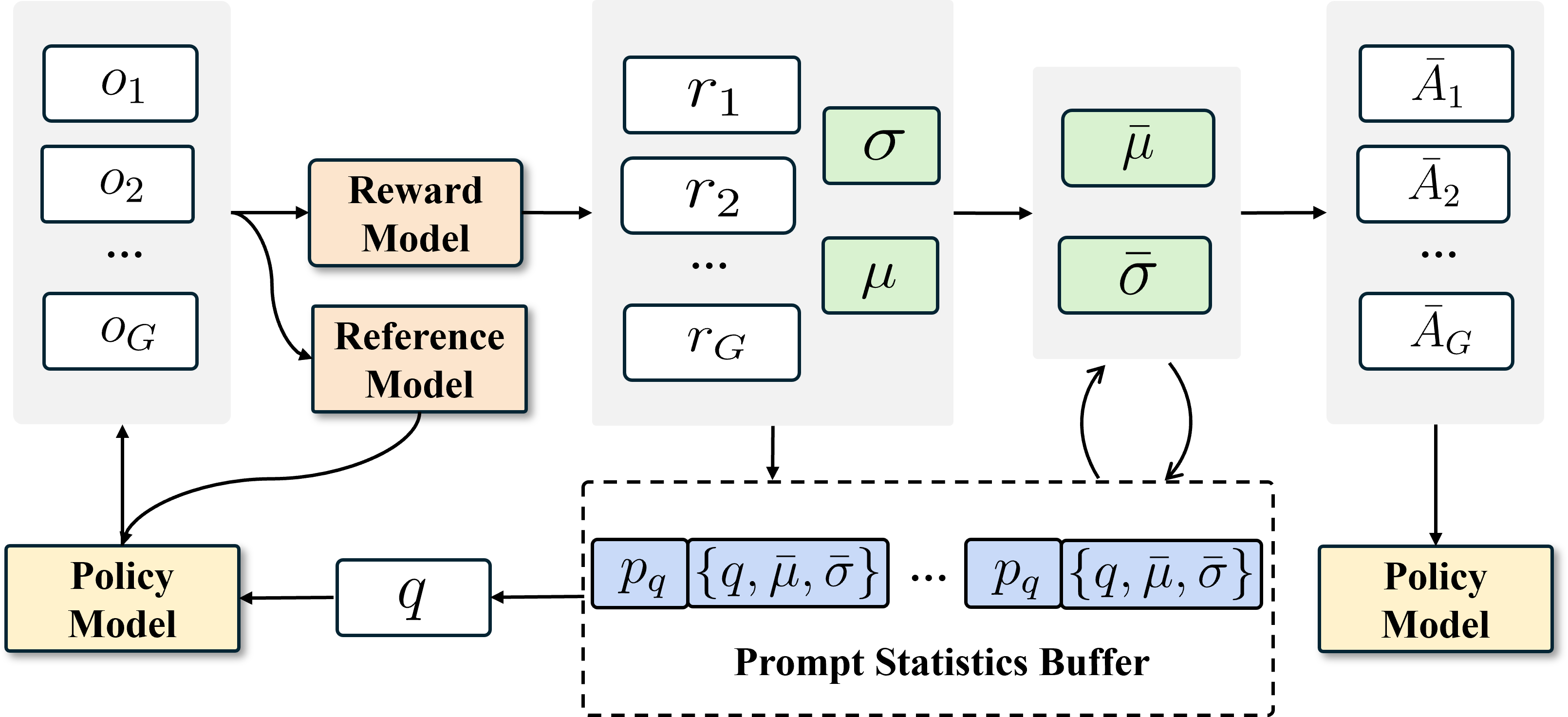

- Led or contributed to the design of an efficient reinforcement fine-tuning method for large language models, addressing training instability and poor data utilization in GRPO-based post-training.

- Introduced EMA-based reward smoothing and priority-based prompt sampling, improving the stability of policy updates and increasing the reuse value of high-impact prompts without storing full trajectories.

- Improved training effectiveness over GRPO baselines, with absolute gains of 5% or more in some settings, while also speeding up convergence.

- Open-sourced the project and validated it on Qwen2.5-Math-1.5B/7B and other model variants across multiple mathematical reasoning benchmarks, including AIME24, MATH500, AMC23, Minerva Math, and OlympiadBench.
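As a rough illustration of the two ideas named above (EMA-based reward smoothing and priority-based prompt sampling), the following Python sketch keeps one smoothed reward per prompt and samples prompts in proportion to a priority score. It is not the PSPO implementation: the class `PromptBuffer`, the smoothing factor `alpha`, and especially the priority formula `r * (1 - r)` (which assumes rewards in [0, 1]) are hypothetical choices made for this example only.

```python
import random


def ema_update(prev, reward, alpha=0.9):
    """Exponential moving average: keep `alpha` of the old value,
    blend in `1 - alpha` of the new reward."""
    return alpha * prev + (1.0 - alpha) * reward


class PromptBuffer:
    """Stores one smoothed reward per prompt; no trajectories are kept.

    Hypothetical helper for illustration, not the PSPO code base.
    """

    def __init__(self, alpha=0.9):
        self.alpha = alpha
        self.smoothed = {}  # prompt_id -> EMA of observed rewards

    def update(self, prompt_id, reward):
        # The first observation initialises the EMA with the reward itself.
        prev = self.smoothed.get(prompt_id, reward)
        self.smoothed[prompt_id] = ema_update(prev, reward, self.alpha)
        return self.smoothed[prompt_id]

    def priority(self, prompt_id):
        # Assumed priority: largest when the smoothed reward is near 0.5,
        # i.e. the prompt is neither always solved nor always failed.
        r = self.smoothed[prompt_id]
        return r * (1.0 - r) + 1e-6  # small floor keeps every weight positive

    def sample(self, k, rng=random):
        # Draw k prompt ids with probability proportional to their priority.
        ids = list(self.smoothed)
        weights = [self.priority(i) for i in ids]
        return rng.choices(ids, weights=weights, k=k)
```

In this sketch, a prompt whose smoothed reward sits at 0 or 1 is sampled rarely, while a prompt with mixed outcomes is revisited more often. Only a single float per prompt is stored, which mirrors the "without storing full trajectories" point in spirit only.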

Selected Publications 📝

PSPO: Prompt-Level Prioritization and Experience-Weighted Smoothing for Efficient Policy Optimization

Xinxin Zhu, Haowen Hou, Ruichong Zhang, Nianbo Zeng, Yulin Peng, Jiongfeng Fang, Fei Yu, Ying Tiffany He

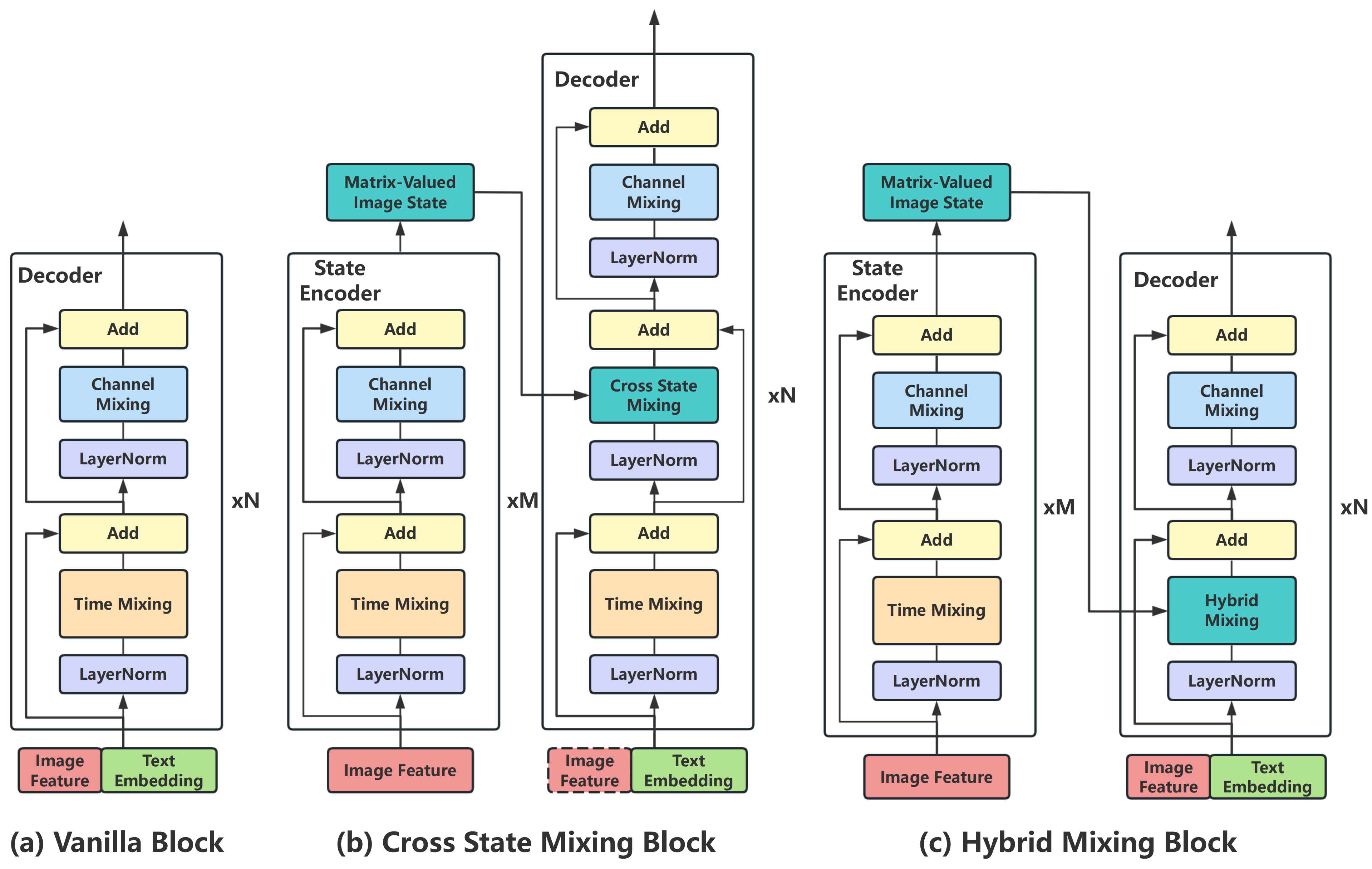

VisualRWKV-HM: Enhancing linear visual-language models via hybrid mixing

Haowen Hou, Fei Ma, Zihang Li, Fei Richard Yu

VisualRWKV: Exploring Recurrent Neural Networks for Visual Language Models

Haowen Hou, Peigen Zeng, Fei Ma, Fei Richard Yu

RWKV-7 “Goose” with Expressive Dynamic State Evolution

Bo Peng, Ruichong Zhang, Daniel Goldstein, Eric Alcaide, Haowen Hou, et al.

Paper | HuggingFace | Code

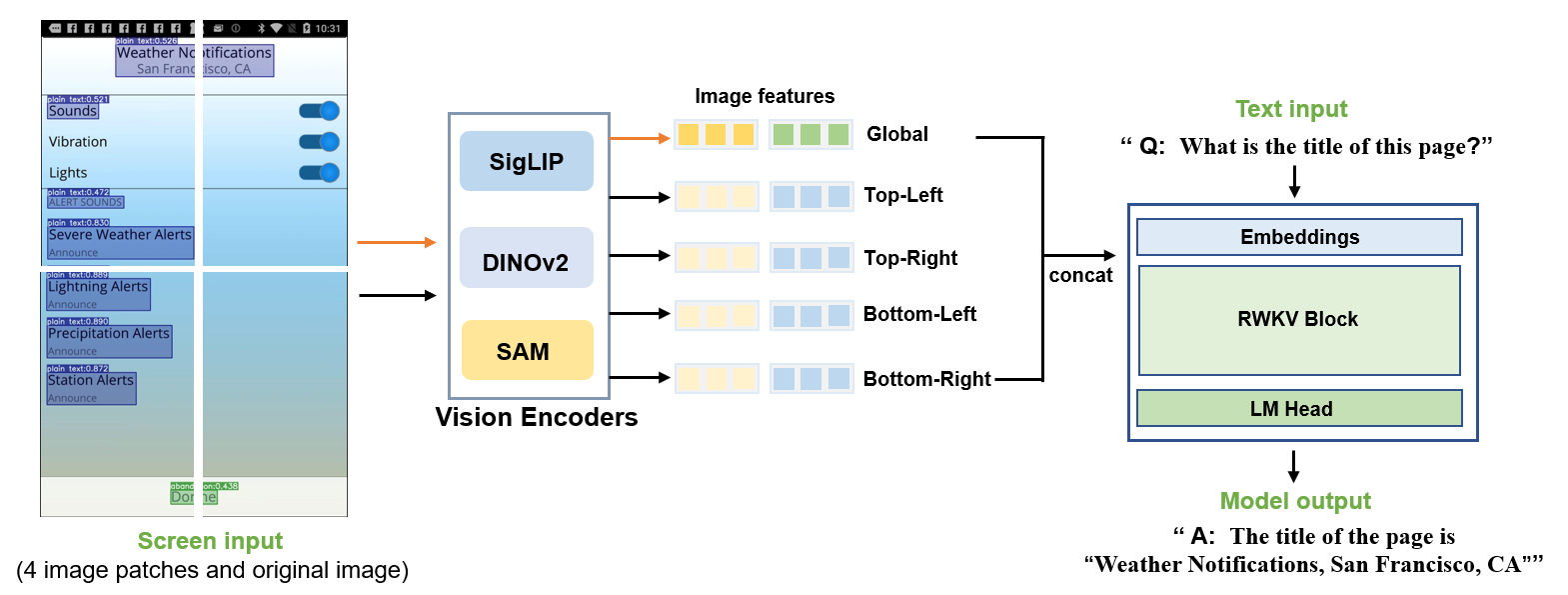

RWKV-UI: UI Understanding with Enhanced Perception and Reasoning

Jiaxi Yang, Haowen Hou

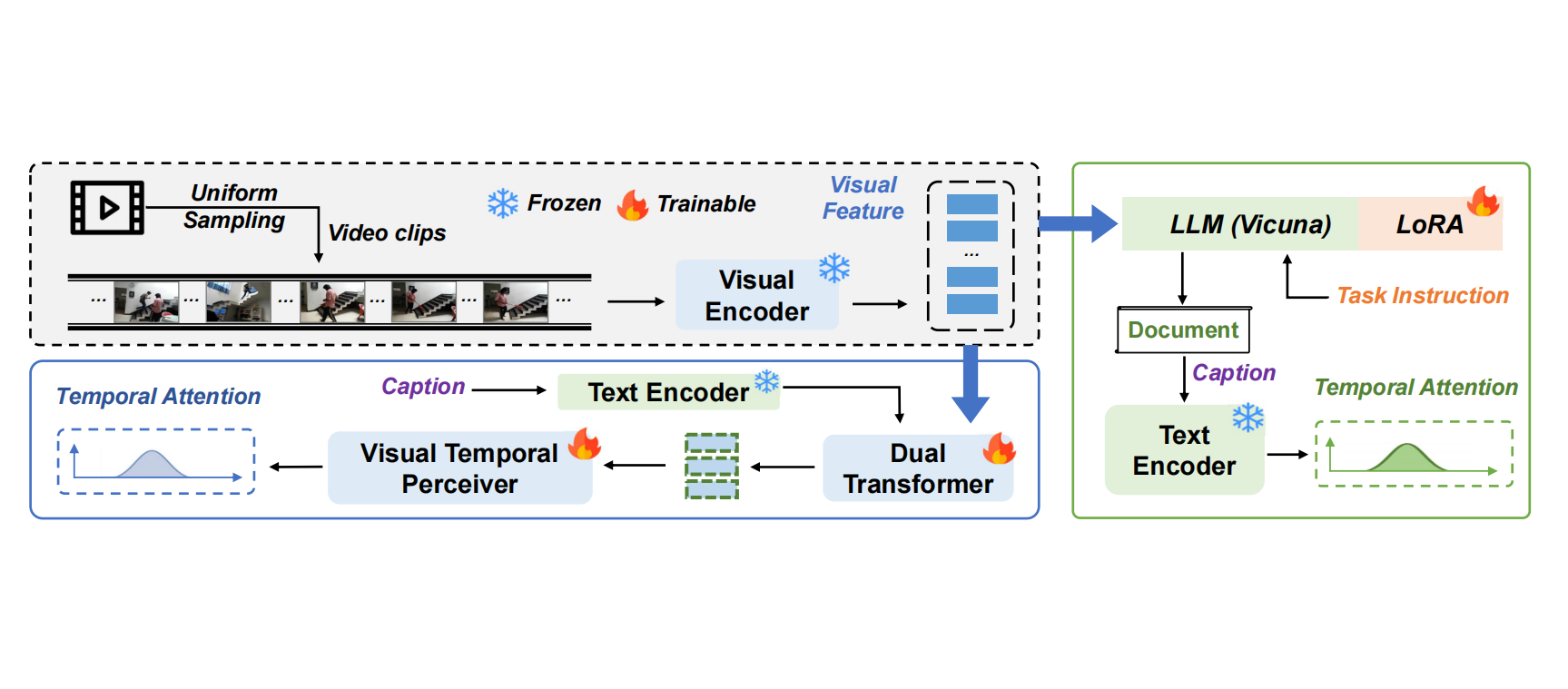

MLLM-TA: Leveraging Multimodal Large Language Models for Precise Temporal Video Grounding

Yi Liu, Haowen Hou (co-first author), Fei Ma, Shiguang Ni, Fei Richard Yu

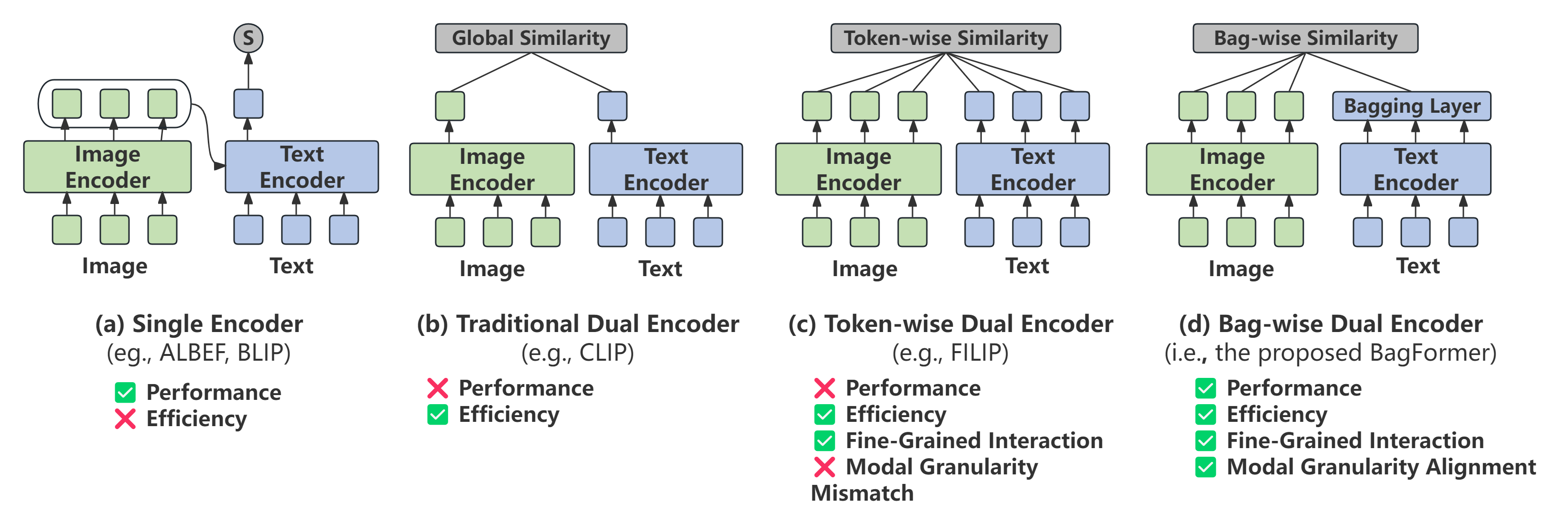

BagFormer: Better cross-modal retrieval via bag-wise interaction

Haowen Hou, Xiaopeng Yan, Yigeng Zhang

Eagle and Finch: RWKV with Matrix-Valued States and Dynamic Recurrence

Bo Peng, Eric Alcaide, Quentin Anthony, …, Haowen Hou, et al.

Paper | HuggingFace | Code

RWKV: Reinventing RNNs for the Transformer Era

Bo Peng, Eric Alcaide, Quentin Anthony, …, Haowen Hou, et al.

Paper | HuggingFace | Code

Other Publications 📚

- [arXiv] EmbeddingRWKV: State-Centric Retrieval with Reusable States

- [arXiv] RWKV-X: A Linear Complexity Hybrid Language Model

- [arXiv] RWKV-TS: Beyond Traditional Recurrent Neural Network for Time Series Tasks

Honors and Awards 🎖

- 2018, Shenzhen High-Level Overseas Talent

- 2013, NUS SMA3 Scholarship

- 2012, NUS NGS Scholarship

- 2007, First Prize, National Olympiad in Chemistry in Provinces (Top 1%)

Invited Talks 💬

- 2025.03, RWKV: Next-Gen Model Architecture, NVIDIA GTC 2025

- 2024.12, RWKV: Next-Gen Model Architecture, Future Medicine Conference

- 2024.10, RWKV: Next-Gen Model Architecture, China National Conference on Social Media Processing 2024

Teaching 🧑‍🏫

- 2024 Fall, Advanced Algorithms

Academic Service 🎓

- 2024.06 - Now, Journal Reviewer, IEEE Signal Processing Letters

- 2025.01 - Now, Conference Reviewer, IEEE International Conference on Multimedia & Expo (ICME)